Lower bounds by negative adversary method

December 16, 2013 5 Comments

Are some questions harder than others?

Last week I quantified hardness of answering a question with a quantum computer as the quantum query complexity. I promised that this model would allow us to develop techniques for proving lower bounds. In fact, in this model there are two popular tools: the polynomial method, and the (negative) adversary method. In this week’s post, I’d like to highlight the latter.

The original version of the quantum adversary method, was proposed by Ambainis [Amb00]. The method starts by choosing two sets of inputs on which takes different values. Then the lower bound is determined by combinatorial properties of the graph of the chosen inputs. Some functions, such as sorting or ordered search, could not be satisfactorily lower-bounded by the unweighted adversary method. Hoyer, Neerbek, and Shi [HNS02] weighted the input pairs and obtained the lower bound by evaluating the spectral norm of the Hilbert matrix. Barnum, Saks, and Szegedy [BSS03] proposed a complex general method and described a special case, the spectral method, which gives a lower bound in terms of spectral norms of an adversary matrix. Ambainis also published a weighted version of his adversary method [Amb03]. He showed that it is stronger than the unweighted method but this method is slightly harder to apply, because it requires one to design a weight scheme, which is a quantum counterpart of the classical hard distribution on inputs. Zhang [Zha05] observed that Ambainis had generalized his oldest method in two independent ways, so he united them, and published a strong weighted adversary method. Finally, Laplante and Magniez [LM04] used Kolmogorov complexity in an unusual way and described a Kolmogorov complexity method. Spalek and Szegedy [SS06] unified the above methods into one equivalent method that can be formulated as a semidefinite program (SDP).

I will present an alternative development observed by Ben Reichardt in terms of optimization problems [Rei11]. Our approach will use SDP duality extensively, but we will not prove the duals explicitly, referring the interested reader to previous literature instead [SS06, Rei09].

From certificate complexity to the adversary method

The certificate complexity of a function is the non-deterministic version of query complexity and can be found by solving the following optimization problem:

We think of as a bit-vector with the

-th component telling us if index

is in the certificate or not. The constraint is simply the contrapositive of the definition of certificate complexity: if

then there must be at least one bit of overlap on the certificates of

and

such that they differ on that bit. The discrete nature of the bit-vector makes certificate complexity a difficult optimization, and it is natural to consider a relaxation:

Note the suggestive name: the above equation corresponds to the dual of the adversary method. Its more common form is the primal version:

Since the adversary bound is a relaxation of the certificate complexity, we know that for all

. In fact, two even stronger barrier stands in the way of the adversary method: the certificate and property testing barriers. The certificate barrier is that for all functions

,

, and if

is total, then we have

[Zha05, SS06]. The property testing barrier is for partial functions, where every zero-input is of Hamming distance at least

from every one-input. In that case the method does not yield a lower bound better than

.

Negative adversary method

To overcome these barriers, Hoyer, Lee, and Spalek [HLS07] introduced the negative adversary method. The method is a relaxation of the adversary method that allows negative weights in the adversary matrix:

Note that we started with certificate complexity and relaxed that to get the dual of the adversary method. We took the dual to get the adversary method, and then relaxed the adversary method to get the negative adversary method. Since we relaxed in both directions in two different ways, the negative adversary method is not clearly related to the certificate complexity.

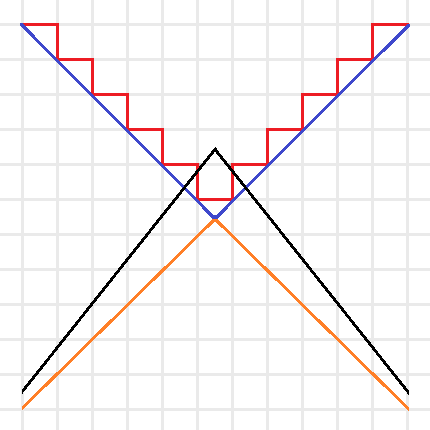

Intuitive sketch of the optimization problems for (Red),

(Blue),

(Orange), and

(Black). Note that the red and blue lines are the boundaries of minimization problems, thus their feasible regions are above the boundaries, and the orange and black lines are the boundaries of maximization problems, thus their feasible regions are below the boundaries.

We can simplify this idea with the above picture. The certificate complexity involved a minimization over the integers, so its feasible region is above the boundary drawn by the red line. The optimization problem for tries to find the lowest point in the feasible region. The adversary method replaces the integer constraint by real values, and is represented by the blue line. Since the optimization of

is still a minimization, this allows us to find lower points in the bigger feasible region. Since the goal of the method is to provide a lower bound, we instead look at

as a maximization problem (with boundary in orange), now the feasible region is below the orange line and any feasible point provides a lower bound. Note that by duality this lower bound is always less than or equal to a feasible point in

’s region and thus less than or equal to the certificate complexity. The negative adversary method’s feasible region is represented by the black line. It takes the maximization in

and removes the non-negativity constraint, expanding the feasible region and thus making it possible to find points with

. Finding natural problems where we have a separation though, has proved to be difficult. In particular, for important problems beyond the certificate complexity barrier (like the collision problem) our best lower bounds come from the polynomial method, and no one has found an approach that uses the negative adversary method directly. Do you know a natural

such that

or better yet, such that

?

Query complexity and the negative adversary method

Of course, without context the method is not very useful. It is the fact that it lower bounds quantum query complexity that first made it a useful tool. Given a function , we have:

To prove this relation, we think of an adversary holding a quantum superposition over oracle strings instead of the oracle representing a specific input. This

is found by the negative adversary method, and corresponds to any eigenvalue of

with eigenvalue

. Thus, any algorithm for solving the problem starts with the initial state (refer to the query complexity post for background on notation):

We then consider the reduced density matrix that the adversary holds. This state begins with no entanglement, and in order to learn

we must create a lot of entanglement in the state. Specifically, we define a progress function

and show 3 points:

The first step is obvious from our choice of the adversary’s initial state and progress function. The second step is an application of Cauchy–Schwarz and triangle inequalities. The third-step demands that the algorithm give the correct output: . This is the most difficult step, and the proof goes through a few special choices of operators and an application of Holder’s inequality. We reference the reader to the original paper [HLS07] for details.

Where do we go from here?

Does the negative adversary method answer if some questions are harder than others? Next time we will see that by looking at span program we can show that the negative adversary method characterizes query complexity: . Thus, the method is a great way to show that some questions are more difficult than others. It also behaves well with respect to iterated functions. In particular, to break the certificate complexity barrier, we can iterate the Kushilevitz-Ambainus partial function

on 6 bits, defined as:

- Zero inputs of

are:

,

- One inputs of

are:

.

I leave it as an exercise to the eager reader to use their favorite SDP solver to show that .

This is the second post in a series on quantum query complexity. I originally wrote this post for the CSTheory Community Blog where it was published on August 4, 2011. Unfortunately, that blog is largely defunct, so I decided to repost here with slight modifications.

References

[Amb00] A. Ambainis. Quantum lower bounds by quantum arguments. In Proceedings of the thirty-second annual ACM symposium on Theory of computing, pages 636-643. ACM, 2000, arXiv:quant-ph/0002066v1.

[Amb03] A. Ambainis. Polynomial degree vs. quantum query complexity. In Foundations of Computer Science, 2003. Proceedings. 44th Annual IEEE Symposium on, pages 230{239. IEEE, 2003, arXiv:quant-ph/0305028.

[BSS03] H. Barnum, M. Saks, and M. Szegedy. Quantum decision trees and semidefinite programming. In Proc. of 18th IEEE Complexity, pages 179-193, 2003.

[HLS07] Peter Hoyer, Troy Lee, & Robert Spalek (2007). Negative weights make adversaries stronger STOC arXiv: quant-ph/0611054v2

[HNS02] P. Hoyer, J. Neerbek, and Y. Shi. Quantum complexities of ordered searching, sorting, and element distinctness. Algorithmica, 34(4):429-448, 2002, arXiv:quant-ph/0102078.

[LM04] S. Laplante and F. Magniez. Lower bounds for randomized and quantum query complexity using Kolmogorov arguments. 2004, arXiv:quant-ph/0311189.

[Rei09] Ben W. Reichardt. Span programs and quantum query complexity: The general adversary bound is nearly tight for every boolean function. In 2009 50th Annual IEEE Symposium on Foundations of Computer Science, pages 544-551. IEEE, 2009, arXiv:0904.2759v1 [quant-ph].

[Rei11] Ben W. Reichardt. Quantum query complexity. Tutorial at QIP2011 Singapore, 2011.

[SS06] Robert Spalek and Mario Szegedy. All quantum adversary methods are equivalent. Theory of Computing, 2:1-18, 2006, arXiv:quant-ph/0409116v3.

[Zha05] S. Zhang. On the power of Ambainis lower bounds. Theoretical Computer Science, 339(2-3):241-256, 2005, arXiv:quant-ph/0311060.

” \vec{p}_x as a bit-vector with the j-th component telling us if index j is in the certificate or not.” How might we find decision algorithms that are more efficient than a Busy Beaver?

I’m not completely sure what you are trying to say.

Keep in mind, we are building bounds in a query model, and not actually computing with universal machines. In particular, when you are lower bounding, you usually have your function specified in some convenient way (i.e. given by an oracle, not to be confused with the query oracle that is used inside the computation which you are analyzing). Further, when working with these sort of functions, your domain is restricted to only the computable part, although this can be used to play some nasty tricks.

Of course, if this wasn’t an analysis tool, but an actual computation (i.e. you wanted to write a program that output lower bounds for specific instant-sizes) then there is no reason to expect it to be easily computable, especially when it characterizes query complexity completely.

Pingback: Cataloging a year of blogging: the algorithmic world | Theory, Evolution, and Games Group

Pingback: Span programs as a linear-algebraic representation of functions | Theory, Evolution, and Games Group

Pingback: Closing the gap between quantum and deterministic query complexity for easy to certify total functions | Theory, Evolution, and Games Group